January 12, 2024

Sora Is a Demo Reel, Not a Product

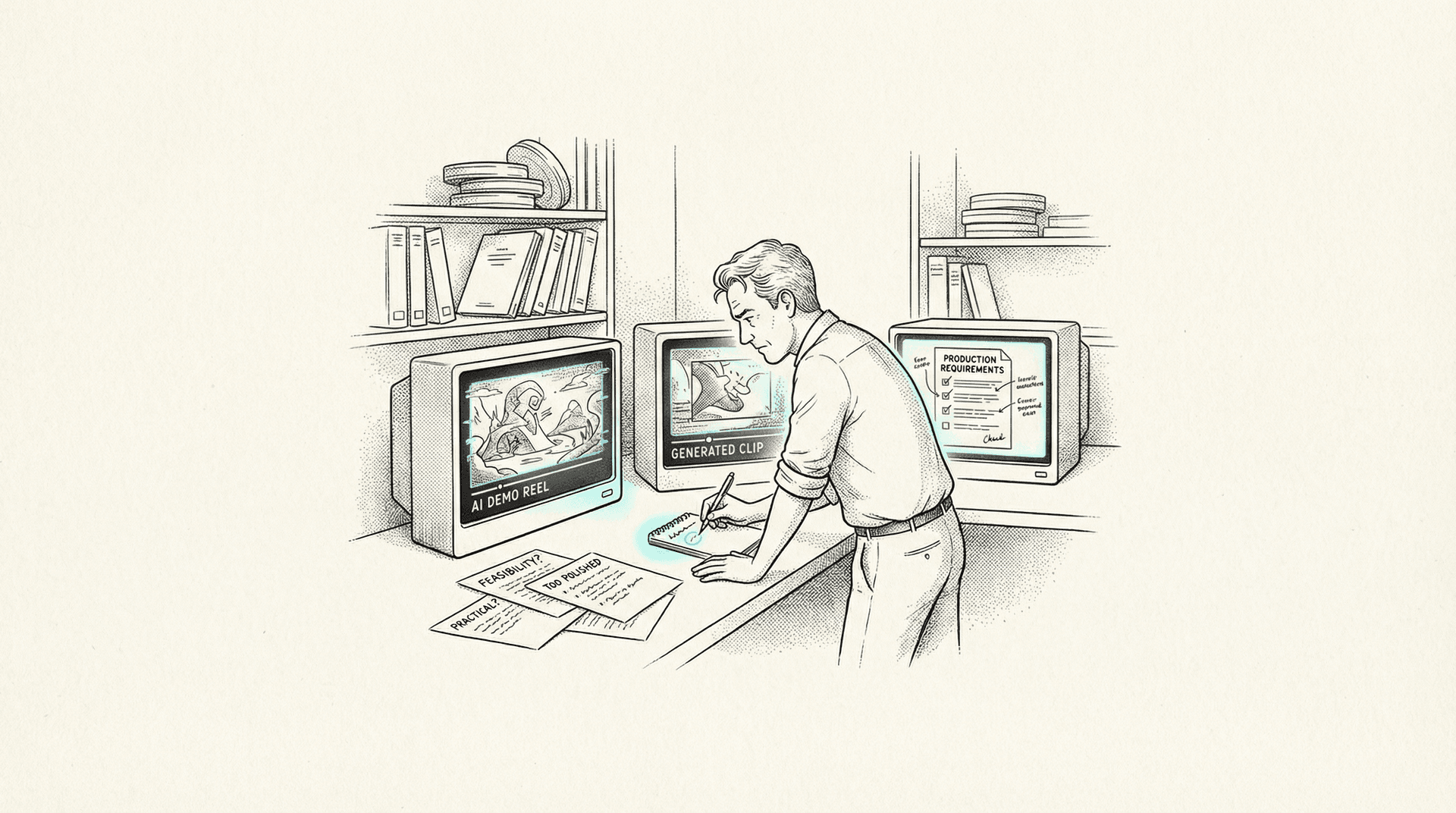

The internet saw a few minutes of cherry-picked video and declared Hollywood dead. Maybe take a breath.

One week ago, OpenAI released Sora to the world. Or rather, they released the idea of Sora. A handful of clips. A technical paper. Some breathless commentary from Sam Altman's Twitter account. And within hours, my timeline turned into a funeral procession for every creative profession simultaneously.

"Hollywood is dead." "Advertising will never be the same." "Anyone can make movies now."

I've been doing this for over twenty years. TV, film, advertising, content production across three languages and half a dozen countries. For the past couple of years, I've been leading AI integration for e& at Saatchi & Saatchi in Dubai. I work with these tools every single day, not in theory, not in concept, but in actual production for an actual telecom brand with actual deadlines.

So here's what I think about Sora: I don't know yet. And neither do you.

The Clips Are Genuinely Impressive

I want to be honest about this. What OpenAI showed is a significant leap. The Tokyo street scene. The woolly mammoths. The woman walking through a neon city. The temporal coherence alone (objects maintaining their shape and physics across multiple seconds of video) is something no publicly available tool can do right now.

If you're comparing these clips to what Runway Gen-2 or Pika 1.0 can produce today, the gap is enormous. It's not incremental improvement. It looks like a generational jump.

But here's the thing.

Every Demo Reel Looks Amazing

That's what demo reels are for. I've been in advertising for two decades. I know what a curated highlight reel looks like. I've made them. I've reviewed hundreds of them from production houses, directors, effects studios. The entire purpose of a demo reel is to show you the best possible output under the best possible conditions.

What you're not seeing in the Sora clips:

- The failed generations. How many attempts did each clip take? Ten? A hundred? A thousand? We don't know.

- The prompts that produced garbage. Every AI tool has a hit rate. What's Sora's?

- The artifacts that got cut. Even in the released clips, if you look carefully, there are moments where physics breaks down, where hands do strange things, where objects morph in ways that wouldn't survive a client review.

- The generation time. How long does each clip take to render? Minutes? Hours?

- The computational cost. What hardware is this running on? What would it cost to make this available at scale?

None of this information exists publicly. Zero. We're evaluating a product (that isn't even a product yet) based entirely on marketing materials from the company that made it.

That should make you cautious.

We've Seen This Movie Before

Remember Make-A-Video? Meta announced it in September 2022 with clips that, at the time, seemed revolutionary. AI-generated video from text prompts. The internet had a similar meltdown. "This changes everything."

It's been almost a year and a half. Make-A-Video never shipped as a usable product. You can't use it. You can't buy it. It was a research demonstration, and it stayed a research demonstration.

How about Google's Lumiere? It was demoed in January, just a month ago. Beautiful clips. Impressive coherence. The same breathless coverage. Is it available? No. Can you use it? No. Is there a timeline? No.

This is a pattern, not an anomaly.

The AI space has a specific rhythm: a research lab produces an impressive demo, the media amplifies it, social media turns it into either utopia or apocalypse, and then months pass while the actual engineering challenges of turning a research demo into a production tool quietly humble everyone involved.

What We Can Actually Use Right Now

Here's what the current landscape actually looks like, as of this week, for someone who needs to produce real work for real clients.

Runway Gen-2 is the most capable publicly available AI video tool. It can generate roughly four seconds of video from text or image prompts. The quality varies wildly. Maybe one in five generations is usable, and "usable" often means "usable as a starting point that needs significant post-production." Motion tends to be sluggish. Characters deform. Text is illegible. But it exists, it works, and you can actually log in and use it today.

Pika 1.0 offers similar capabilities with a different aesthetic sensibility. Slightly better at certain types of motion, slightly worse at others. Three to four seconds of output. Same fundamental limitations.

Stable Video Diffusion from Stability AI has openly downloadable weights, which matters enormously for production workflows. You can run it locally. You can modify it. You can integrate it into pipelines. But check the license before any commercial use (the initial release was positioned as a research preview), the output quality is behind Runway, and the generation process is less user-friendly.

All three of these tools share the same core constraints: short duration, inconsistent quality, limited control over camera movement and composition, and no reliable way to maintain character consistency across multiple shots.

These are the tools I'm actually evaluating for production use. Not because they're perfect (they're far from it) but because they exist as things you can touch, test, and build workflows around.

The Questions Sora Hasn't Answered

When I evaluate any new tool for production work, I have a checklist. It's boring. It's practical. It's the difference between a cool demo and something that actually ships.

Cost. What does it cost per generation? Per minute of output? AI video generation is computationally expensive. Runway Gen-2 charges roughly $0.05 per second of generated video on their paid plans. For 30 seconds of footage, that's $1.50 per pass, and since only a fraction of generations are usable, you'll need many passes. What will Sora cost? We have no idea.
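To make the cost question concrete, here is a minimal sketch of how per-attempt pricing and hit rate combine. It uses the roughly $0.05 per second figure quoted above and, purely as an illustrative assumption, the one-in-five hit rate I mentioned for Runway Gen-2. The function name is hypothetical, and treating each attempt as an independent coin flip is a simplification.

```python
def expected_cost_per_usable_clip(price_per_second, clip_seconds, hit_rate):
    """Expected spend to land one usable clip.

    Assumes every attempt costs the same and succeeds independently
    with probability hit_rate (a simplifying assumption).
    """
    if not 0 < hit_rate <= 1:
        raise ValueError("hit_rate must be in (0, 1]")
    cost_per_attempt = price_per_second * clip_seconds
    expected_attempts = 1 / hit_rate  # mean of a geometric distribution
    return cost_per_attempt * expected_attempts


# Illustrative numbers only: $0.05/s, 30 seconds, one usable result in five.
cost = expected_cost_per_usable_clip(0.05, 30, 0.2)
print(f"${cost:.2f}")  # $7.50
```

The point is not the exact figure. A per-second price means little until you know the hit rate, and for Sora we know neither.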

Speed. How long does a generation take? If it's 10 minutes per 20-second clip, that fundamentally changes how you can use it in a production workflow. If it's two hours, it's a different conversation entirely.
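A quick way to see why generation time matters: count how many attempts fit in a working day. This is a rough sketch under stated assumptions (one generation at a time, an eight-hour day, no queueing or review time). The function name is hypothetical, and the two durations are just the examples from the paragraph above, not measured Sora numbers.

```python
def attempts_per_day(minutes_per_generation, working_hours=8.0):
    """Whole generations that fit in one working day, run back to back."""
    if minutes_per_generation <= 0:
        raise ValueError("minutes_per_generation must be positive")
    return int(working_hours * 60 // minutes_per_generation)


print(attempts_per_day(10))   # 48
print(attempts_per_day(120))  # 4
```

Forty-eight attempts a day lets you iterate with a creative director in the room. Four attempts a day means every prompt is a bet.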

Consistency. Can you generate the same character doing different things in different shots? This is the single biggest limitation of every AI video tool right now. You can make a beautiful shot of a woman walking through a city. Can you then make the same woman sitting in a cafe? In a close-up? From behind? Consistently? This is table stakes for any narrative or advertising use.

Controllability. Can you specify camera movement? Focal length? Lighting direction? Composition? Production isn't about generating "a cool thing." It's about generating a specific thing that matches a storyboard, fits a script, and satisfies a creative director who has very particular opinions about everything.

Resolution and format. The demo clips look great on Twitter. What's the actual output resolution? Is it broadcast-quality? Does it hold up on a 4K display? Can you output different aspect ratios for different platforms?

Length. The demos show clips of up to about 60 seconds. Is that the maximum? What's the practical limit? A 30-second TV spot is the basic unit of advertising. Can Sora reliably produce that?

Artifact frequency. Every AI tool produces artifacts. Weird distortions. Physics violations. Uncanny valley faces. What percentage of Sora's output has visible artifacts? In a production context, an artifact frequency above 5-10% makes a tool unreliable for client-facing work.
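Measuring this doesn't require anything fancy. A minimal sketch, assuming a reviewer has marked each generated clip as either having a visible artifact or not. The function names and the sample tallies are hypothetical, and the two thresholds are simply the ends of the 5-10% range above.

```python
def artifact_rate(flags):
    """Fraction of reviewed clips flagged with a visible artifact.

    flags is a list of booleans, one per reviewed clip (True = artifact).
    """
    if not flags:
        raise ValueError("need at least one reviewed clip")
    return sum(1 for flagged in flags if flagged) / len(flags)


def within_tolerance(flags, max_rate):
    """True if the observed artifact rate is at or below max_rate."""
    return artifact_rate(flags) <= max_rate


# Hypothetical review: 40 clips, 3 flagged.
review = [True] * 3 + [False] * 37
print(f"{artifact_rate(review):.1%}")  # 7.5%
print(within_tolerance(review, 0.10))  # True
print(within_tolerance(review, 0.05))  # False
```

A tool that sits between the two thresholds, like this made-up example, is exactly the kind that looks fine in a demo and becomes a judgment call in production.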

OpenAI has answered exactly none of these questions. That's not a criticism of OpenAI. They're a research lab announcing research. But it should calibrate your expectations.

The Gap Between Demo and Product Is Always Bigger Than You Think

Here's something I've learned from working with AI tools across the full stack of creative production.

Midjourney V6 produces stunning images. I use it regularly. But getting a specific image that matches a specific brief for a specific client still requires significant skill, iteration, and often compositing in Photoshop or Firefly. The gap between "look at this amazing image Midjourney made" and "here's the approved key visual for the campaign" is filled with hours of work.

DALL-E 3 integrated into ChatGPT is remarkably capable. It also can't reliably put the right number of fingers on a hand, struggles with specific brand guidelines, and produces text that looks like it was written by someone having a stroke. These are solvable problems. But they're not solved yet.

SDXL is powerful, open-source, and flexible. Running it locally gives you control that cloud services can't match. But the learning curve is steep, the hardware requirements are significant, and the workflow for production-quality output involves ComfyUI pipelines that would make a casual user's head spin.

The pattern is consistent. Every AI tool is simultaneously more impressive and more limited than social media makes it seem. The impressive part gets the viral tweet. The limited part gets discovered on the third day of trying to use it for actual work.

I expect Sora to follow this exact trajectory. Not because I'm pessimistic, but because that's how technology works.

What I Actually Think Will Happen

I could be wrong about all of this. Maybe Sora launches in three months and it's everything the demos promise, at a reasonable price, with production-grade controls. If that happens, it changes the game. I'll be the first to say so.

But based on pattern recognition from watching this space closely for two years, here's my best guess:

Sora will launch in some form this year. The initial public version will be more limited than the demos suggest. It will be expensive. It will be slow. The first wave of users will discover all the things the demo reel didn't show you. There will be a period of recalibration where the narrative shifts from "Hollywood is dead" to "okay, this is cool but not quite there yet."

And then, gradually, it will improve. Because that's also how this works. GPT-4 Turbo is meaningfully better than the GPT-4 that launched last March. Midjourney V6 is miles ahead of V4. The trajectory is real. The timeline is just always longer than the hype suggests.

What Production People Should Actually Do

If you work in advertising, content production, film, or any creative field, here's my honest advice as someone doing this work right now:

Learn the tools that exist today. Runway Gen-2, Pika, Stable Video Diffusion. Get your hands dirty. Understand what they can and can't do. Build workflows. Develop an intuition for prompting. This knowledge transfers when better tools arrive.

Don't make strategic decisions based on demo reels. Don't kill a production budget because "Sora will handle it." Don't lay off your motion graphics team because you saw a Twitter clip. When Sora (or whatever comes next) is actually available, evaluate it like a professional: test it, benchmark it, compare it to your current pipeline, and make decisions based on evidence.

Stay skeptical of anyone who tells you exactly how the future will unfold. The people most confidently predicting the death of Hollywood are, almost without exception, people who have never shipped a frame of professional content in their lives. The signal-to-noise ratio on AI Twitter is abysmal.

And be excited. Cautiously. Because the underlying technology is real, the progress is real, and the potential is real. I've been integrating AI tools into advertising production for long enough to know that this stuff is transformative when applied with skill and realistic expectations.

Sora might be the tool that changes everything. It might also be this year's Make-A-Video. The honest answer is that nobody knows yet, including OpenAI.

The demo reel is impressive. I want to see the production.

Omar Kamel is AI Team Leader for e& at Saatchi & Saatchi, Dubai.
