December 25, 2023

What I Actually Learned Using AI on Client Work This Year

A year-end honest accounting from someone who shipped real campaigns with these tools.

2023 was the year AI image generation went from party trick to production tool. I know because I spent it doing the work. Not tweeting about the work. Not posting LinkedIn carousels about "10 ways AI will transform your brand." Actually shipping creative for a major telecom client with these tools in the pipeline, every day, under real deadlines with real budgets and real consequences for getting it wrong.

I recently took on the role of AI Team Leader for e& (formerly Etisalat) at Saatchi & Saatchi in Dubai. Before this, I spent years in content creation, TV, film, and advertising production. I've been integrating AI into professional creative workflows since these tools were barely functional. This article is what I actually learned this year. Not predictions. Not hype. Lessons, earned the hard way.

AI Is Better at Production Than Creation

This is the single most important thing I can tell you, and the thing most AI evangelists get backwards.

The tools are spectacular at batch work. Resizing assets across formats, generating variations on an approved concept, producing fifty versions of a social media post with different copy overlays. That kind of production grunt work used to eat days. Now it eats hours.

Where they fall apart is the part everyone wants them to be good at: original creative ideation. I've watched people feed briefs into ChatGPT and Claude 2 expecting breakthrough concepts, and what comes back is competent mediocrity. It reads like a B-minus strategy deck. The ideas aren't bad, exactly. They're just obvious. They're the first thing anyone in the room would have thought of.

The real value lives in the middle of the pipeline. Give AI a strong concept that a human creative director developed, and then let it execute, iterate, and scale that concept across deliverables. That's where time collapses. That's where budgets stretch. Trying to replace the ideation itself is like buying a Formula 1 engine and bolting it onto a shopping cart. The power is real, but it's pointed at the wrong problem.

Client Trust Is Earned Slowly

Here's something nobody talks about at AI conferences: clients are scared.

Not of being replaced. They're scared of looking stupid. They're scared that an AI-generated asset will go out with six fingers on a hand, or with text that says "Etisalet" instead of "Etisalat," or with a cultural detail that's just wrong enough to become a meme. They're scared because they've seen all those viral posts about AI failures, and they do not want to be the next one.

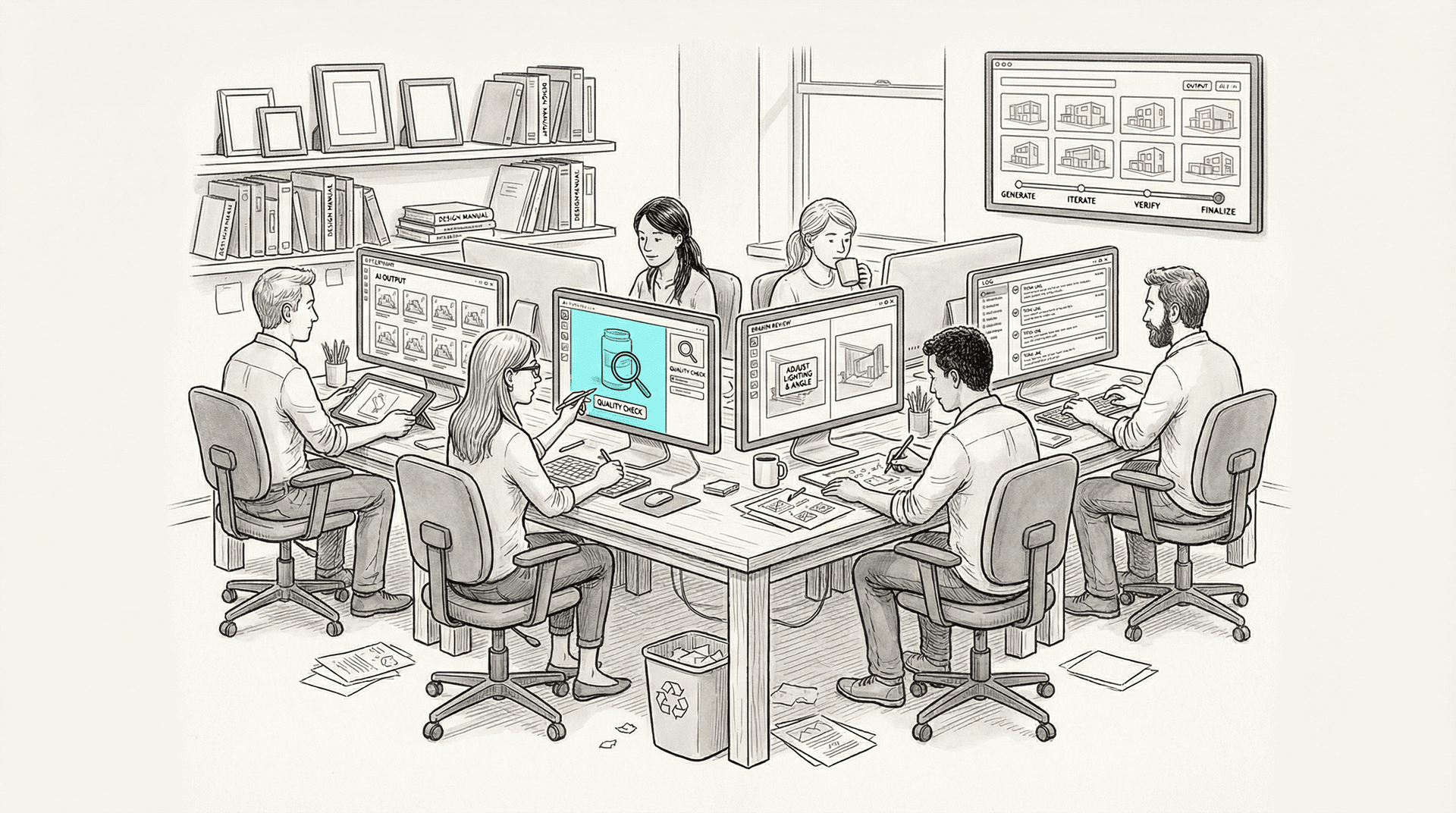

Earning trust means showing process. Every time we present AI-assisted work to e&, we walk through the pipeline. Here's the base generation. Here's the human refinement. Here's the quality control checkpoint. Here's where a real retoucher made corrections. The client needs to see that AI is a tool in a professional workflow, not a magic box that spits out finished work.

It also means managing expectations honestly. When a client asks "can AI do this?" the correct answer is often "yes, but not at the quality level you need, so here's what we'll do instead." That kind of honesty builds more trust than promising the moon and delivering cheese.

The Tools Changed Faster Than Anyone Could Track

I'm going to level with you: keeping up with the tooling in 2023 felt like trying to read a book while someone kept rewriting the chapters.

In January, Stable Diffusion 1.5 was the workhorse for local generation. By July, SDXL had dropped and made everything before it look primitive. Midjourney went from V4 to V5, then V5.1, then V5.2, and just weeks ago released the V6 alpha that finally handles text in images (sort of). DALL-E 2 was the name everyone knew in January; by October, DALL-E 3 arrived integrated into ChatGPT and changed the conversation entirely. Adobe Firefly went from announcement to actual shipping product. Pika 1.0 launched in November. Stable Video Diffusion landed the same month. Runway Gen-2 kept shipping updates all year.

For my team, this created a real operational problem. You can't build stable production workflows on top of tools that fundamentally change every six weeks. We'd get a pipeline dialed in with Stable Diffusion 1.5 and custom LoRAs, and then SDXL would drop and those LoRAs wouldn't transfer. We'd get comfortable with Midjourney V5.2's aesthetic, and then V6 alpha would shift the entire output style.

The lesson: build workflows around principles, not specific model versions. Our ComfyUI pipelines are modular. Swapping a checkpoint is a node change, not a rebuild. That modularity saved us multiple times this year.
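To make the "node change, not a rebuild" idea concrete, here is a minimal sketch in plain Python. It is not ComfyUI's workflow format or API. The `PipelineConfig` class, the `swap_checkpoint` helper, and every file name are hypothetical, chosen only to show the checkpoint treated as one swappable setting while the rest of the pipeline stays put.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class PipelineConfig:
    """Hypothetical description of one generation pipeline (illustration only)."""
    checkpoint: str          # model file: the part that changes most often
    loras: tuple[str, ...]   # style adapters, tied to one model family
    width: int
    height: int
    steps: int


def swap_checkpoint(cfg, checkpoint, loras=(), **overrides):
    """Return a copy of cfg that uses a different checkpoint.

    LoRAs are reset to an empty tuple unless new ones are passed in,
    because adapters trained for one model family don't carry over.
    Any other field (width, height, steps) can be overridden by keyword.
    """
    return replace(cfg, checkpoint=checkpoint, loras=tuple(loras), **overrides)


# Hypothetical file names, not real project assets.
sd15 = PipelineConfig(
    checkpoint="sd15_base.safetensors",
    loras=("brand_style_sd15.safetensors",),
    width=512,
    height=512,
    steps=30,
)

# Moving to a new model family is a single call; the original config is untouched.
sdxl = swap_checkpoint(sd15, "sdxl_base.safetensors", width=1024, height=1024)
```

The point of the frozen dataclass is that the old configuration survives the swap, so a pipeline that was working last week can still be reproduced after the upgrade.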

Local Generation Became Non-Negotiable

This is the one that surprised me most, and it shouldn't have.

When you're working on a major telecom account, client IP is sacred. Brand guidelines, unreleased campaign concepts, product imagery before launch. None of that can go through a third-party API where you don't control the data.

Early in the year, we tried working with cloud-based generation for everything. It was faster to set up, and the quality from Midjourney was hard to beat. But then legal got involved (as legal does), and the conversation got real very quickly. Where does the data go? Who else can see the prompts? Are these images being used to train future models? With Midjourney's default public gallery, the answer to that last question was a definitive yes. That's a dealbreaker for unreleased campaign work.

So we went local. SDXL running on our own hardware through Automatic1111 first, then increasingly through ComfyUI as the workflows got more complex. ControlNet for compositional control. Custom LoRAs trained on brand-approved visual styles. Everything stays on our machines.

It's slower. It's more work to maintain. The output quality, out of the box, often trails what Midjourney can produce. But the data never leaves the building, and that's what makes the whole operation possible at the enterprise level. Anyone telling you that cloud-only AI generation is fine for serious client work either doesn't understand IP law or doesn't have serious clients.

The "Anyone Can Do It" Myth Collapsed

Early in 2023, the prevailing narrative was that AI democratized creativity. Anyone can make beautiful images now. Prompting is a skill, but it's an accessible skill. The barrier to entry has been eliminated.

By December, that narrative looks naive.

Yes, anyone can generate a cool image in Midjourney. That part is true. What they can't do is generate a cool image that matches a client's brand guidelines, sits correctly in a predefined layout, works across twelve aspect ratios, maintains consistency with the other forty-seven assets in the campaign, features a product that looks exactly like the actual product, and passes legal review.

Production-quality output requires production-quality skills. You need to understand ControlNet conditioning if you want compositional control. You need to know how to train and apply LoRAs if you want style consistency. You need to understand inpainting workflows for surgical corrections. You need color science fundamentals to match brand palettes. You need to understand aspect ratios, bleed, and safe zones for print and digital specifications.
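As a small illustration of the aspect-ratio and safe-zone arithmetic mentioned above, here is a sketch in plain Python. The function names and the 10% margin are assumptions made for the example, not any platform's official specification. It finds the largest centered crop of a given ratio inside a master image, then insets a safe zone inside that crop.

```python
def center_crop_box(src_w, src_h, ratio_w, ratio_h):
    """Largest centered crop with aspect ratio ratio_w:ratio_h.

    Returns (left, top, right, bottom) in pixels.
    """
    # Cross-multiply to compare the two ratios without floating point.
    if src_w * ratio_h > src_h * ratio_w:
        # Source is wider than the target: keep full height, trim the sides.
        crop_h = src_h
        crop_w = src_h * ratio_w // ratio_h
    else:
        # Source is taller than (or equal to) the target: keep full width.
        crop_w = src_w
        crop_h = src_w * ratio_h // ratio_w
    left = (src_w - crop_w) // 2
    top = (src_h - crop_h) // 2
    return (left, top, left + crop_w, top + crop_h)


def safe_zone(box, margin_fraction):
    """Inset a box by margin_fraction of its width and height on each side."""
    left, top, right, bottom = box
    dx = round((right - left) * margin_fraction)
    dy = round((bottom - top) * margin_fraction)
    return (left + dx, top + dy, right - dx, bottom - dy)


# Example: crops of a 1920x1080 master for three common ratios.
for name, (rw, rh) in {"square": (1, 1), "vertical": (9, 16), "wide": (16, 9)}.items():
    box = center_crop_box(1920, 1080, rw, rh)
    print(name, box, "safe zone:", safe_zone(box, 0.10))
```

A centered crop is the naive baseline. In practice the subject is rarely dead center, which is exactly why a human still has to check every format.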

The gap between "I made a cool picture" and "I produced a campaign-ready asset" is the same gap that's always existed between amateur and professional work. The tools changed. The gap didn't.

Arabic Text and Gulf Cultural Representation Are Still Broken

I work in the Gulf. My clients are Gulf companies targeting Gulf audiences. And I can tell you that every major AI image generation model handles Arabic terribly.

Text generation in Arabic? Forget it. DALL-E 3 can now render English text reasonably well. Arabic text comes out as decorative squiggles that look vaguely Arabic to someone who doesn't read Arabic. Midjourney V6 alpha, despite its improvements with English text, produces Arabic that would be comical if it weren't so frustrating. Every single Arabic text element in our deliverables gets done manually in post-production.

Cultural representation is a deeper problem. These models were trained overwhelmingly on Western data. Ask for a "business meeting in Dubai" and you'll get a conference room that could be in Kansas, populated by people who look generically "Middle Eastern" in a way that flattens the actual diversity of the Gulf. Getting Emirati-specific details right (the style of kandura, the correct headwear, the architecture, the landscapes) requires extensive prompt engineering, custom training data, and usually manual correction anyway.

ElevenLabs has made solid progress with Arabic voice synthesis, and HeyGen handles Arabic lip-sync reasonably well for avatar work. But on the visual generation side, if you're producing content for Arabic-speaking markets, plan to spend significant time on cultural quality control that the models simply can't handle yet.

This isn't just a technical limitation. It reflects whose culture the training data centers, and whose it ignores. That's a conversation the industry needs to have honestly.

AI Didn't Replace Anyone on My Team. It Changed What Everyone Does.

I manage a team of creatives, and not a single person lost their job to AI in 2023. I need to say that clearly because the fear is real.

What happened instead is more interesting and more nuanced. Everyone's job description shifted.

Our retouchers spend less time on basic compositing and more time on fine corrections that AI can't handle. Our designers spend less time generating visual options and more time curating, refining, and ensuring brand consistency. Our copywriters spend less time on first drafts and more time on strategy, tone, and cultural adaptation. I spend less time in the production weeds and more time building workflows, evaluating new tools, and training the team.

The work got more interesting, in my opinion. The tedious parts compressed. The human parts (judgment, taste, cultural knowledge, client relationship management) expanded.

But I want to be honest about the tension. If AI keeps improving at this rate, the question of headcount will come up. Not because the technology demands it, but because someone in a finance department will look at productivity numbers and do the math. The defense against that isn't pretending it won't happen. It's making sure your team's human skills are so obviously valuable that the math never works out in favor of cutting them.

The Costs Are Real and Not Trivial

The fantasy version of AI in production is that it saves money from day one. The reality is different.

Here's a partial list of what we spent money on this year: GPU hardware for local generation. Midjourney subscriptions for the team. ChatGPT Plus and Claude Pro subscriptions. Runway Gen-2 credits. ElevenLabs voice generation credits. Cloud compute for training custom models. Storage for model files, assets, and project archives. And then the big one that never makes it onto the spreadsheet: time. Training time. Every hour someone on my team spent learning ComfyUI or debugging a ControlNet workflow or figuring out why their LoRA training collapsed at epoch 12 is an hour they weren't producing billable work.

The ROI is real, but it's not instant. We hit a tipping point around month six or seven where the team's proficiency was high enough that the speed gains outweighed the investment. Before that, it was an act of faith and forward planning.
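The tipping point is simple cumulative arithmetic, sketched below in Python. Every number here is invented to show the shape of the curve and is not a figure from the account. `break_even_month` is a hypothetical helper that returns the first month in which cumulative savings catch up with cumulative spend.

```python
def break_even_month(upfront_cost, monthly_cost, monthly_savings):
    """First month (1-indexed) where cumulative savings >= cumulative cost.

    monthly_savings is a list with one value per month, since savings
    usually ramp up as the team gets more proficient.
    Returns None if break-even is not reached within the list.
    """
    cumulative_cost = upfront_cost
    cumulative_savings = 0
    for month, saved in enumerate(monthly_savings, start=1):
        cumulative_cost += monthly_cost
        cumulative_savings += saved
        if cumulative_savings >= cumulative_cost:
            return month
    return None


# Invented figures: hardware up front, subscriptions monthly,
# and savings that start near zero while the team is still learning.
ramp = [0, 1000, 3000, 5000, 8000, 10000, 12000, 12000]
month = break_even_month(upfront_cost=20000, monthly_cost=2000, monthly_savings=ramp)
print(month)
```

With a slow ramp like this one, the early months are pure cost. That is what the first half of the year feels like from the inside.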

If someone tells you AI will save you money in the first quarter of adoption, they're selling something. The payoff comes later, and only if you invest in training and infrastructure properly.

Looking Ahead (With Appropriate Uncertainty)

I'm not going to make grand predictions about 2024. The pace of change in 2023 made fools of everyone who tried to predict the next quarter, let alone the next year.

What I'll say is this: the foundation is laid. The tools are real. The workflows are maturing. The team knows what it's doing. 2023 was the year of building the plane while flying it. If 2024 lets us fly the plane we've already built, with better engines and less turbulence, that's more than enough.

But I suspect it won't be that simple. Video generation is clearly the next frontier. Pika, Stability, and Runway are all pushing hard. If video generation reaches the quality and controllability that image generation reached this year, the production landscape shifts again. Whether the industry is ready for that shift is an open question.

What I'm certain about is this: the gap between people who actually use these tools in production and people who merely talk about them will keep widening. 2023 was the year that gap became visible. 2024 is the year it starts to matter.

Omar Kamel is AI Team Leader for e& at Saatchi & Saatchi, Dubai.
