November 14, 2024

AI Video Got Serious This Year

2024 was the year AI video stopped being a novelty and started being a tool. Here's what that actually looks like from the production side.

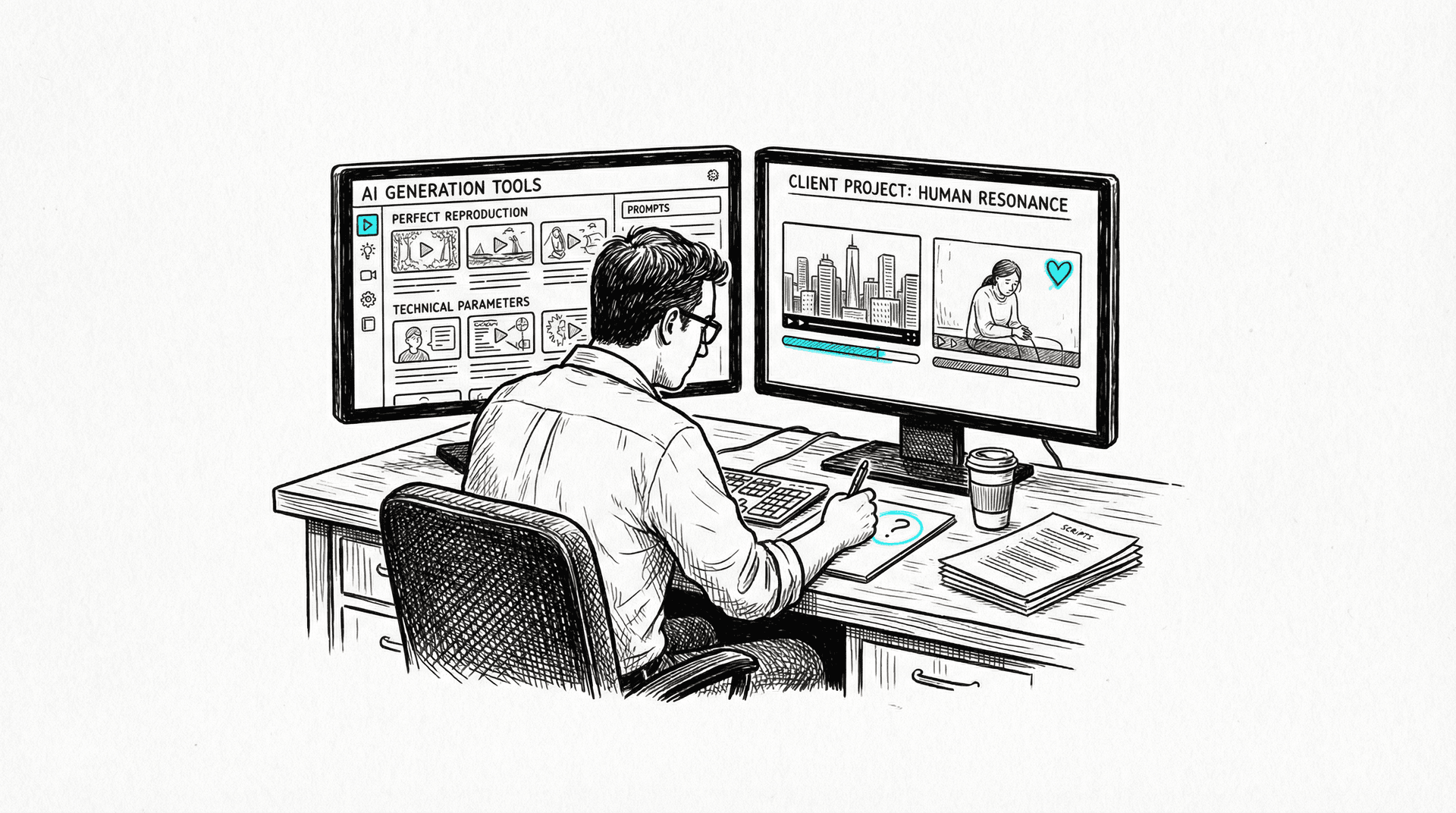

I've spent most of this year testing every AI video tool the moment it launches. Not reviewing them. Using them. Putting them into actual production workflows for client work at an advertising agency. That distinction matters, because the gap between "this looks cool on Twitter" and "I can put this in front of a client" is enormous. And in 2024, for the first time, some of these tools started crossing that gap.

Not all of them. Not for everything. But the shift is real, and if you work in production, you need to understand what changed and what didn't.

The Starting Gun

The year had a clear inflection point: June 2024.

Runway launched Gen-3 Alpha, and it was the first AI video tool that made me think "I can use this on a project." Not "this is impressive for AI." Not "this will be amazing in two years." I can use this. Now. The motion quality, the coherence between frames, the ability to actually direct the camera. It felt like a generational leap from Gen-2, which had been interesting but fundamentally unreliable.

That same month, Kling 1.0 arrived from China and immediately made things interesting. Where Runway excelled at cinematic aesthetics, Kling understood physics in a way that felt different. Objects had weight. Fabric moved correctly. Water behaved like water. It wasn't perfect, but it was solving different problems than Runway, which meant production teams suddenly had options.

Luma Dream Machine joined the race. MiniMax (also known as Hailuo Video) emerged with surprisingly capable output. Pika evolved to version 1.5 with better motion control. The competitive pressure was visible in real time: each tool shipping updates faster because the others were shipping updates faster.

Six months ago, we had one semi-usable AI video tool. Now we have five or six fighting for the same market. That's not hype. That's an industry forming.

What You Can Actually Use Today

Here's what I've found works in real production, right now, in November 2024.

Social content and short-form digital. This is the sweet spot. A 3-to-6 second clip for an Instagram story, a motion background for a product post, an animated reveal for a campaign launch. The duration is short enough that AI video's limitations (more on those in a minute) don't have time to surface. The quality bar for social is high but forgiving in the right ways. Nobody expects a 5-second story clip to look like a Ridley Scott film.

Product shots and reveals. Give Runway or Kling a well-crafted still image of a product and ask it to add subtle motion (a slow rotation, particles in the background, a liquid pour) and you get something that would have taken a motion graphics artist half a day. It's not replacing that artist. It's giving the team more options in less time.

B-roll and motion backgrounds. Need abstract flowing shapes for a presentation? Atmospheric footage for a mood board? A slow pan across a stylized landscape for a pitch deck? AI video handles this well because it doesn't need to be specific. It just needs to feel right.

Pre-visualization. This is the use case that excites me most. Before you commit budget to a shoot, you can now generate rough versions of complex shots to show a client what you're envisioning. It's not the final product. It's a communication tool. And it saves a staggering amount of back-and-forth.

Motion graphics enhancement. Take existing motion graphics and use AI to add organic elements: realistic smoke, water, fabric movement. This hybrid approach, combining traditional mograph with AI-generated elements, is where some of the most convincing work is happening.

What Still Doesn't Work

And here's the part that AI evangelists on social media keep skipping over.

Dialogue and performance. Try to generate a person delivering a line of dialogue with appropriate facial expressions, lip sync, and emotional nuance. It falls apart instantly. The uncanny valley isn't just present; it's a chasm. We are nowhere near replacing actors for performance work. Not close.

Hand interactions. The AI video equivalent of the six-fingered hand problem from image generation. Characters picking up objects, typing, shaking hands, gesturing naturally. Still a mess. Getting better, but not production-ready.

Text in frame. Need a character to hold up a sign? Need text on a screen within the shot? Good luck. AI video tools hallucinate text with the same enthusiasm that image tools do. Every frame is a new creative interpretation of what letters might look like.

Long-form coherence. Anything beyond about 10 seconds starts to drift. Characters change appearance. Physics get weird. The camera develops opinions of its own. You can extend clips, but each extension is a roll of the dice. For broadcast or long-form content, this is a dealbreaker.

Broadcast hero content. The centerpiece ad. The emotional apex of a campaign. The thing that runs during the Super Bowl. AI video is not ready for this. And Coca-Cola just proved it.

The Coca-Cola Cautionary Tale

Let's talk about that Coca-Cola holiday ad.

A few weeks ago, Coca-Cola released an AI-generated version of their classic "Holidays Are Coming" Christmas ad. The original, with its fleet of illuminated trucks rolling through a snowy town, is one of the most beloved ads in history. Coca-Cola decided to recreate it with AI.

Technically, it's competent. The trucks look like trucks. The snow looks like snow. The lights glow. The framing references the original. If you showed it to someone who'd never seen the original, they might say it looks fine.

But the reaction has been savage. And justified.

The ad is emotionally hollow. There's something about the way the people in the ad move (or don't move) that registers as wrong, even to viewers who can't articulate why. The warmth that made the original iconic isn't there. It was never in the script or the music or the color grading. It was in the humanity of the footage: real trucks, real snow, real breath in cold air. The AI version is a technical reproduction of the surface without any of the substance underneath.

This matters enormously for our industry. Coca-Cola has an effectively unlimited budget. They had the best AI tools available. They had time and talent. And they still produced something that made people angry. Not because it was bad AI. Because it was good AI applied to the wrong problem. Some content needs to be made by humans, and the audience can feel the difference even when they can't explain it.

If you're in a client meeting and someone says "can we just do the whole thing with AI?" show them the Coca-Cola ad. Then show them the comments.

The Sora Situation

Nine months ago, OpenAI demoed Sora and the internet lost its collective mind. The demo videos were extraordinary: long, coherent, cinematic footage that seemed to leap ahead of everything else.

Nine months later, it's still not public.

OpenAI keeps promising a release. They've been promising. And while they promise, Runway shipped Gen-3 Alpha. Kling shipped version 1.0 and then 1.5. Luma and MiniMax and Pika all shipped usable products that real people are making real work with.

There's a lesson here about the difference between demos and products. A demo shows you what a model can do in ideal conditions with cherry-picked outputs. A product has to work reliably, at scale, for paying customers, with all the messy reality that implies. Maybe Sora will launch and blow everything else away. Maybe it won't. But right now, the companies that shipped are winning, and the company that demoed is not.

The history of technology is full of incredible demos that never became usable products. I'll get excited about Sora when I can log in and use it.

The Image Side of Things

While video grabbed the headlines, image generation had its own maturation year. And the video progress is built on this foundation.

Flux.1 from Black Forest Labs established itself as the new standard for local image generation. Run through ComfyUI, it offers a level of control and quality that makes SDXL feel like a previous era (which, at this pace, it basically is). The ability to run it locally, without API calls, without usage limits, without sending client assets to someone else's server, matters enormously for production work.

Midjourney V6.1 improved consistency and prompt adherence. Still the easiest tool for fast concepting, still the hardest to integrate into a technical pipeline.

The image tools matter for video because the best AI video workflow right now isn't text-to-video. It's image-to-video. You craft a perfect starting frame using Flux or Midjourney, then feed it to Runway or Kling for animation. The quality of your input image determines the ceiling of your video output. Good in, good out. The same principle that's governed production since the invention of the camera.

The 80/20 Reality

Here's what I tell every client and every creative director who asks about AI video: it changes the first 20% of production and almost none of the remaining 80%.

AI video can generate raw material faster than any traditional method. Need ten options for a motion background? Five minutes instead of five hours. Need a rough cut of an impossible camera move to test a concept? Done before lunch. The ideation and raw generation phase has been compressed dramatically.

But everything after that? The editing, the color grading, the compositing, the sound design, the music, the pacing, the storytelling? That's the same work it always was. A great editor spending a day in DaVinci Resolve or Premiere is still the difference between raw AI output and something you'd actually broadcast.

The teams getting the best results right now are not the ones with the best AI tools. They're the ones with strong traditional post-production skills who are adding AI to the front of their pipeline. They know how to take an 80% AI output and push it to 100% with conventional techniques.

If you're building a team for AI-augmented production, hire great editors and compositors first. The AI part is the easy part.

Where This Goes

I don't do predictions. Predictions in AI are a fool's game; the pace of change makes six-month forecasts useless. But I'll note trajectories.

The competition between Runway, Kling, and the rest will push quality up and prices down. That's already happening. The tools that survive will be the ones that solve real production problems, not the ones that generate the most impressive Twitter demos.

The Coca-Cola backlash will make smart brands more careful about where they deploy AI video. That's healthy. Not every problem should be solved with AI, and the market is starting to learn that lesson the hard way.

Control will matter more than raw quality. I don't need AI video to look 5% more photorealistic. I need it to do what I tell it to do, consistently, without hallucinating its own creative vision into my shot. The tools that give me reliable control over camera, motion, and composition will win the production market. The tools that give me beautiful but unpredictable output will remain toys.

And the gap between AI-native content (made entirely with AI) and AI-augmented content (made by humans with AI tools) will become the defining divide in our industry. The former makes Coca-Cola ads that people hate. The latter makes real work better, faster, and more ambitious.

That's the year. AI video got serious. Not finished. Not revolutionary. Serious. Like a junior team member who just started delivering usable work. You still check everything they do. You still need senior people making the real decisions. But for the first time, they're contributing to the project instead of just being a distraction.

That's progress. Real progress. The boring, useful kind.

Omar Kamel is AI Team Leader for e& at Saatchi & Saatchi, Dubai.
