April 1, 2026

The Gap Between AI Hype and Production Reality

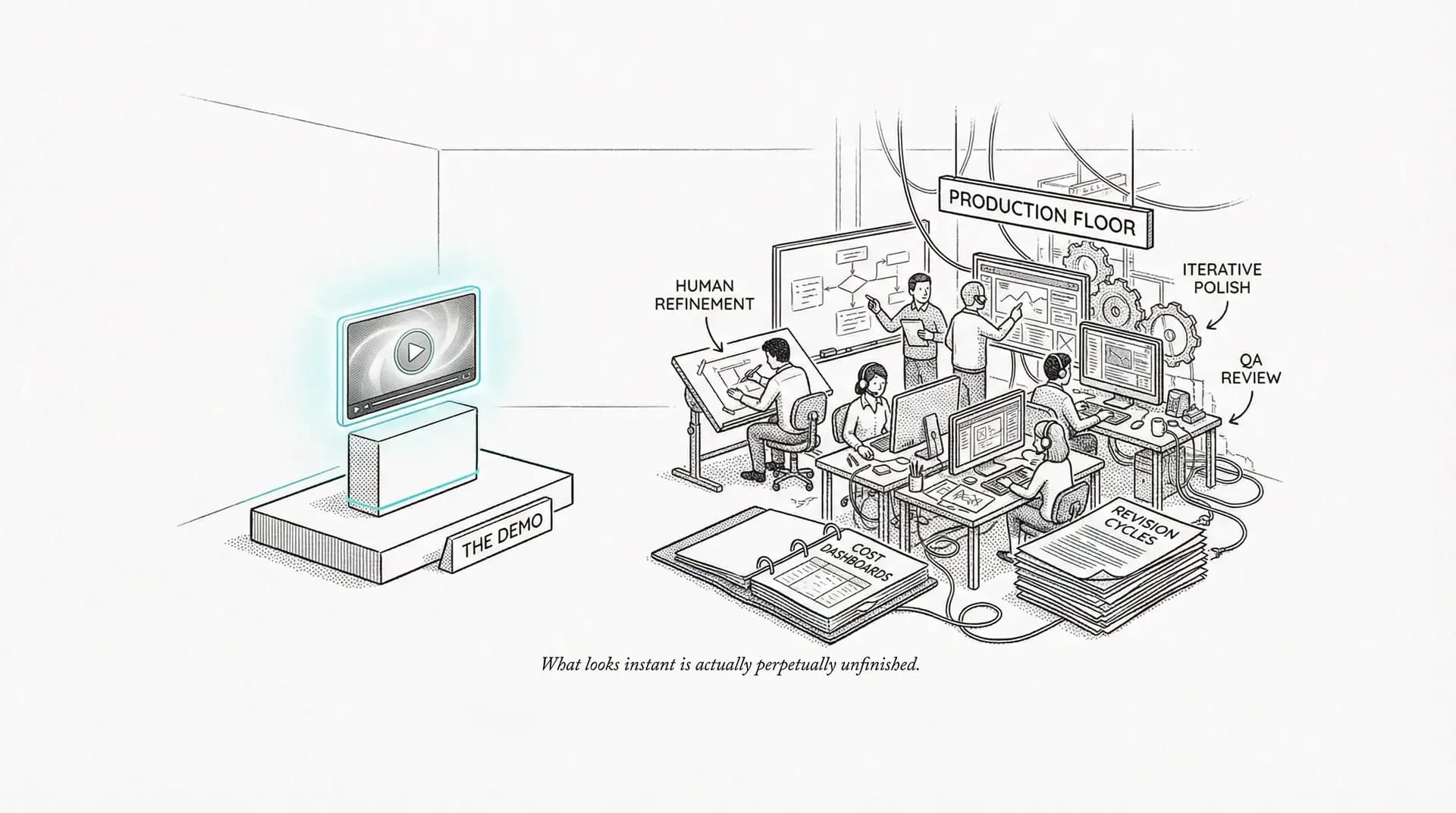

The distance between a demo reel and a deliverable is measured in hours, dollars, and broken promises.

In February 2024, OpenAI showed the world Sora. Sixty seconds of cinematic, physically coherent video generated from text. The internet lost its mind. Hollywood was dead. Advertising was dead. Every visual medium was about to be democratized into oblivion.

In March 2026, OpenAI announced Sora is shutting down. The app closes April 26. The API follows in September.

Between those two dates lies the entire story of AI hype versus production reality. And it's a story worth understanding, because the gap between what AI promises and what it delivers isn't just a matter of timeline. It's a matter of kind.

The Demo-to-Production Gap

Every AI tool in history has looked magical in its demo reel. This is not a coincidence.

When OpenAI unveiled Sora, the debut videos were, by any reasonable reading, curated from hundreds (possibly thousands) of generations. OpenAI did acknowledge some weaknesses, but the clips showing objects morphing between frames, physics violations, and characters dissolving into visual noise were not the ones that went viral. When the tool shipped publicly as Sora Turbo in December 2024, users discovered what "cherry-picked" actually meant: the average generation looked nothing like the highlight reel.

Sora 2 arrived in September 2025, adding synchronized dialogue, sound effects, and improved physical accuracy. It was better. And it was also burning through $4.2 million per day in GPU compute, with each minute of generated video costing approximately $3.80 in inference alone. Users peaked at about a million before declining to under 500,000. The math never worked.
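Taking those two reported figures at face value, a back-of-the-envelope check shows what they imply. This is a sketch of the arithmetic, not OpenAI's accounting: it assumes (as a simplification) that the entire daily compute bill went to video generation at the quoted per-minute rate, and that the bill didn't shrink as the audience did.

```python
# Reported figures quoted in the text above.
DAILY_COMPUTE_USD = 4_200_000  # daily GPU compute spend
COST_PER_MINUTE_USD = 3.80     # inference cost per generated minute of video


def implied_minutes_per_day(daily_spend: float, cost_per_minute: float) -> float:
    """Minutes of video the daily spend buys, assuming it all goes to generation."""
    return daily_spend / cost_per_minute


def compute_cost_per_user_per_day(daily_spend: float, users: int) -> float:
    """Daily compute spend spread evenly across active users (a simplification)."""
    return daily_spend / users


minutes = implied_minutes_per_day(DAILY_COMPUTE_USD, COST_PER_MINUTE_USD)
print(f"Implied video generated per day: {minutes:,.0f} minutes")

# Peak audience and the later, smaller audience mentioned above.
for users in (1_000_000, 500_000):
    per_user = compute_cost_per_user_per_day(DAILY_COMPUTE_USD, users)
    print(f"{users:,} users: ${per_user:.2f} per user per day, "
          f"${per_user * 30:,.0f} per user per 30 days")
```

At a million users that works out to $4.20 of compute per user per day; at 500,000 it doubles to $8.40. If the compute bill stays put while users leave, every departure makes each remaining user more expensive, which is one concrete way to read "the math never worked."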

This pattern isn't unique to Sora. It's the industry standard.

The Usual Suspects

Google's AI video tools have followed a consistent playbook: announce research (Imagen Video, Lumiere, Veo) with stunning demo reels, most visibly Veo on the I/O stage in 2024, then either ship a hobbled consumer version months later or fold the capability into a platform where it's hard to access directly. Google's Veo is available through Vertex AI and is now integrated into Adobe Firefly's multi-model hub, but the path from "Google I/O demo" to "production tool" was anything but the straight line the announcement implied.

Meta reported that over a million advertisers used its AI creative tools by mid-2025. Impressive, until you learn that most of that usage was automated cropping and resizing, not creative generation. Meta's Advantage+ tools improved performance marketing efficiency, but for brand creative work, the output quality remained what one creative director I know called "clip art with a college education."

Runway has been the most honest player in the space, steadily improving from Gen-2 through Gen-3 Alpha to Gen-4.5, which holds the top benchmark position. But even Runway's own promotional materials created a perception gap. Corridor Digital and other creator studios showed behind-the-scenes workflows where Runway-generated footage required frame-by-frame compositing, rotoscoping, and color work, creating nearly as much post-production work as it saved in production.

Stability AI (the company behind Stable Diffusion, arguably the most important open-source AI model ever created) went through leadership turmoil, layoffs, and financial distress. CEO Emad Mostaque resigned in March 2024. The company that kickstarted the generative AI revolution couldn't sustain a business model around it.

Jasper AI, once valued at $1.5 billion and positioned as the AI replacement for copywriters, went through multiple pivots and significant layoffs as ChatGPT and Claude offered better writing capabilities at lower cost.

Seedance 2.0 from ByteDance offered yet another flavor of the same lesson. The model was technically strong: excellent for animation, stylized content, and text-to-video. Then Hollywood studios including Disney, Netflix, Paramount, and Sony threatened legal action, and ByteDance responded with content filters so aggressive that realistic human faces are now blocked as reference images. A video generation tool that can't take a face reference is, for most advertising purposes, a video generation tool you can't use. The capability didn't degrade; access to it did. It's a different kind of hype-to-reality gap: the tool works, but the world around it doesn't let you use it.

The pattern is always the same: a spectacular demo, a breathless valuation, and then the long, unglamorous confrontation with reality, whether that reality is technical, financial, or legal.

The 80/20 Problem

Here's the core truth that every AI production demo carefully avoids: AI gets you 80% of the way in 20% of the time, but the last 20% still takes 80% of the effort.

That last 20% is everything clients pay for: the craft, the polish, the brand compliance, the cultural sensitivity, the legal clearance, the pixel-level precision. It's the difference between a demo and a deliverable. And it requires the same human skills it always did.

A commonly cited figure from production studios: AI-generated creative assets for client-facing work require two to four hours of human refinement per deliverable, compared to four to eight hours for fully manual creation. The savings are real, roughly 50% on production time for certain asset types. But "50% faster" is a long way from "instant," and it comes with new costs that the productivity claims conveniently omit.
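The "roughly 50%" falls out of the midpoints of those two ranges, but the ranges themselves allow a much wider spread. A minimal sketch using only the hour figures quoted above; pairing the extremes of the two ranges is my own framing for illustration, not part of the cited figure.

```python
def time_saved(ai_hours: float, manual_hours: float) -> float:
    """Fraction of production time saved versus fully manual creation."""
    return 1 - ai_hours / manual_hours


AI_HOURS = (2, 4)      # human refinement per AI-assisted deliverable
MANUAL_HOURS = (4, 8)  # fully manual creation per deliverable

# Midpoint of each range: the basis for the "roughly 50%" claim.
midpoint = time_saved(sum(AI_HOURS) / 2, sum(MANUAL_HOURS) / 2)
# Hypothetical extremes: a quick AI fix vs a slow manual job, and the reverse.
best_case = time_saved(min(AI_HOURS), max(MANUAL_HOURS))
worst_case = time_saved(max(AI_HOURS), min(MANUAL_HOURS))

print(f"midpoint: {midpoint:.0%}, best case: {best_case:.0%}, worst case: {worst_case:.0%}")
```

The midpoint lands on 50%, but the same ranges span anything from 75% saved to no saving at all, so "50% faster" is an average that any individual deliverable can miss.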

The Hidden Costs Nobody Mentions

Compute isn't free. Enterprise-grade AI API costs for agencies running high-volume generation run $10,000-50,000 per month for a mid-size team, according to Digiday and Ad Age reporting. Self-hosted ComfyUI workflows require GPU hardware: an NVIDIA RTX 4090 (the minimum serious production card) costs $1,600+, and video workflows demand even more horsepower.

New roles aren't savings. Agencies have created entirely new positions (AI producers, prompt engineers, creative technologists) at salaries of $80,000-150,000. These aren't replacing existing headcount; they're supplementing it. The traditional creatives, editors, and designers are still here, still necessary, and now also expected to learn AI tools on top of their existing skills.

QA just got harder. AI-generated content requires review for hallucinations, brand safety violations, inadvertent likenesses, cultural insensitivities, and factual errors. Several agencies have created dedicated QA roles specifically for AI output review. That's a new cost center, not a savings line.

Legal review is mandatory. The unresolved copyright landscape (Getty v. Stability AI, the RIAA v. Suno case, the US Copyright Office's evolving guidance, the EU AI Act's transparency requirements) means legal teams need to review AI-generated assets before they ship. That review takes time and costs money.

The iteration tax. Here's one nobody talks about: when AI generates fifty variations in a minute, someone has to review fifty variations. The review and approval process expands to fill the increased output. More options don't always mean faster decisions; they often mean longer debates and more revision cycles.
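To make the iteration tax concrete, here's a toy model. The 30 seconds of review per variation and the three review rounds are hypothetical numbers chosen for illustration, not measurements from any agency.

```python
GENERATION_MINUTES = 1  # "fifty variations in a minute"
VARIATIONS = 50
SECONDS_PER_REVIEW = 30  # hypothetical: one quick look per variation
REVIEW_ROUNDS = 3        # hypothetical: e.g. internal, creative director, client


def review_minutes(variations: int, seconds_each: float, rounds: int) -> float:
    """Human time spent looking at every variation in every review round."""
    return variations * seconds_each * rounds / 60


review = review_minutes(VARIATIONS, SECONDS_PER_REVIEW, REVIEW_ROUNDS)
print(f"generation: {GENERATION_MINUTES} min, review: {review:.0f} min "
      f"({review / GENERATION_MINUTES:.0f}x the generation time)")
```

In practice each round prunes the set, so treat this as an upper bound. The point survives the simplification: review time scales with the number of outputs, while generation time barely moves.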

The Narratives That Didn't Pan Out

"AI Will Replace Creatives"

It hasn't. Through 2025, the creative industry saw layoffs, but the causes were tangled together: post-pandemic economic correction, media spending shifts, consolidation, and genuine AI efficiency gains. Major holding companies (WPP, Publicis, Omnicom, IPG) reduced headcount primarily in operational and support roles, not senior creative positions.

What has happened is subtler and, in some ways, worse: the junior creative pipeline is being squeezed. AI most directly threatens entry-level positions: junior copywriters, production designers, retouchers. These are the exact roles that train the next generation of senior creatives. If you hollow out the bottom of the ladder, you shouldn't be surprised when nobody emerges at the top in ten years.

Freelance rates for commodity creative work (basic illustration, simple copywriting, stock-style photography) have declined. Rates for high-end creative direction and strategic work have remained stable or increased. The bifurcation is real: AI is making mediocre work cheaper and good work more valuable.

"AI Makes Everything Faster"

Sometimes. Not always.

VFX studios reported that AI-assisted compositing sometimes introduced artifacts that took longer to fix than manual work would have. Copywriting teams found that editing AI-generated copy to match a specific brand voice took nearly as long as writing from scratch. Video production teams discovered that generating footage with AI tools, then editing, color-correcting, and compositing it into a coherent narrative was often slower than traditional methods for projects under thirty seconds.

The Nielsen Norman Group published research showing that AI-assisted writing was faster for first drafts, but total time-to-completion (including editing, fact-checking, and revision) was only 10-15% less than fully manual work for professional-quality output. That's useful. It's not the revolution people were promised.
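Why would a much faster first draft translate into such a modest overall saving? The same logic as Amdahl's law applies: speeding up one stage only helps in proportion to that stage's share of the whole job. A minimal sketch; the 25% drafting share and the speedups below are hypothetical values, not figures from the Nielsen Norman Group research.

```python
def overall_time_saved(draft_share: float, draft_speedup: float) -> float:
    """Fraction of total time saved when only the drafting stage gets faster.

    draft_share: fraction of total time spent drafting (0..1).
    draft_speedup: how many times faster drafting becomes with AI.
    Assumes editing, fact-checking, and revision take as long as before.
    """
    return draft_share * (1 - 1 / draft_speedup)


# Hypothetical: drafting is a quarter of the job.
for speedup in (2, 4, 100):
    saved = overall_time_saved(0.25, speedup)
    print(f"{speedup}x faster drafts -> {saved:.1%} less total time")
```

With drafting at a quarter of the job, doubling drafting speed saves 12.5% overall, and even an infinitely fast draft can't save more than 25%. If AI drafts need more editing than human ones, the real saving shrinks further.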

"Anyone Can Use AI"

The democratization was real but limited. AI lowered the floor (anyone can type a prompt and get something) but it didn't raise the ceiling. Professional output still requires professional skills: composition, color theory, typography, art direction, an understanding of camera language, and the editorial judgment to distinguish between a hundred mediocre outputs and the three that actually work.

The most effective AI users in creative agencies aren't the ones who learned prompting; they're experienced professionals who use AI to amplify deep existing skills. The tool is a multiplier. If you multiply zero by ten, you still get zero.

What Actually Delivered

This isn't a hit piece on AI. I use these tools every day and they make me better at my job. The problem isn't the technology; it's the narrative around it. So here's what actually works:

Adobe Generative Fill became a legitimate daily-use production tool. Background extension, object removal, and quick compositing are all measurably faster than manual methods, with reliably good output that is commercially safe. This is what honest AI integration looks like: specific, useful, integrated into existing workflows.

Image generation for concepting compressed the ideation phase. Art directors explore visual directions in hours instead of days. This is a real, documented workflow improvement that every agency I know has adopted.

Kling 3.0 and Runway Gen-4.5 moved AI video from parlor trick to production tool for short-form content. Not for everything, not without post-production, but for social content, product reveals, and digital advertising, the quality is there.

Automated localization (HeyGen for video dubbing, LLMs for copy adaptation, batch processing for format variation) delivered unambiguous time and cost savings for multi-market campaigns.

AI transcription and captioning (Whisper-based solutions, Premiere's auto-captioning, Descript) achieved 95%+ accuracy for clear audio. Small thing, huge time saver.

LLMs for research and first-draft writing are useful when treated as accelerators rather than replacements. Not controversial, not revolutionary, just useful.

Where We Actually Are

Gartner placed generative AI firmly in the "Trough of Disillusionment" in their 2025 Hype Cycle. That's where we are: past the peak of inflated expectations, working through the unglamorous reality of actual integration.

Deloitte found that only 26% of companies were seeing "significant" ROI from their generative AI investments. The World Federation of Advertisers reported that while 80%+ of major advertisers were experimenting with AI, fewer than 20% had integrated it into core production workflows.

Sir John Hegarty, founder of BBH, put it perfectly: "AI is going to make mediocrity faster." He's right. AI produces average work by definition; it's trained on averages. Deviation from the average is what we call creativity, and it remains stubbornly, beautifully human.

The Regional Perspective Nobody's Writing

I work in Dubai. The GCC is one of the most aggressive AI-adopting regions in the world. The UAE has a Minister of State for Artificial Intelligence (the world's first such cabinet position), Abu Dhabi built the Mohamed bin Zayed University of Artificial Intelligence, and the Technology Innovation Institute developed the Falcon LLM. This is not a region that's sitting out the AI wave.

And yet.

The hype-to-reality gap is, if anything, wider here than in the US or Europe, because most AI tools that power creative production (Midjourney, Flux, Runway, Kling, Suno) were built primarily on Western, English-language data. When I use those tools to produce content for GCC markets, I confront the gap in its most concrete form:

Arabic text rendering is broken across most image generation models. Not "imperfect." Broken. Letters disconnect, direction reverses, diacritics vanish, and the result would embarrass a first-year design student. For a region where Arabic typography is a core element of brand identity, this isn't a minor inconvenience. It's a fundamental limitation that forces manual composition for every Arabic-language deliverable.

Cultural representation defaults to Western norms. Generate "professional woman in office" in Midjourney and you get a white woman in a Western-style blazer. Generate "man in traditional Gulf dress" and most models give you something generic. They can't tell the difference between an Emirati Kandura (collarless, with the tarboosh tassel, minimalist) and a Saudi Thobe (buttoned collar, different embroidery, different cut). Getting that wrong in an ad for an Emirati client isn't a "close enough" situation. It's telling your audience you don't know where they live.

The one notable exception has been Nano Banana Pro (Gemini 3 Pro Image from Google DeepMind), which actually handles GCC cultural distinctions with real accuracy. It became my primary image generation tool precisely because it narrows this cultural gap more than any competitor. But even it doesn't eliminate the need for human review, because cultural sensitivity extends far beyond clothing into social dynamics, gender representation, and religious context.

The AI hype narrative is written from San Francisco and assumes a San Francisco audience. The production reality is that for roughly 80% of the world's markets, these tools require significant adaptation before they produce anything a client would accept.

What Survives the Trough

Gartner's Trough of Disillusionment isn't where ideas go to die. It's where they go to grow up. And what I see emerging from the trough is more interesting than what went in.

The tools that are surviving are the ones that stopped trying to replace human creative work and started trying to accelerate it. ComfyUI never promised to make anyone an artist. It promised a scriptable, reproducible pipeline for image generation, and delivered. Kling 3.0 didn't promise to replace film production. It promised better short-form video with more control, and delivered. ElevenLabs didn't promise to replace voice actors. It promised voice cloning and multilingual dubbing, and delivered.

The common thread: tools that solve specific, well-defined production problems, with honest marketing about what they can and can't do, and sustainable business models that don't require burning millions per day in GPU compute.

Meanwhile, the tools that promised everything (Sora's "imagine anything, generate everything" pitch, Jasper's "AI replaces your copywriter" positioning, the parade of startups claiming to "democratize creativity") are the ones shutting down, pivoting, or slowly deflating.

There's a lesson here that goes beyond AI: honesty is a better product strategy than hype. The tools that told you what they were actually good at earned your trust. The ones that told you they'd change everything earned your skepticism, and then your abandonment.

The Work That Matters

The real story of AI in creative production isn't the story of disruption. It's the story of integration: unglamorous, incremental, and requiring exactly the kind of craft knowledge that the hype narrative said would become obsolete.

The best AI-produced advertising I've seen in the past year wasn't made by people who are good at prompting. It was made by people who are good at making ads, who also happen to know how to use AI tools. The tool amplified their skill. It didn't substitute for it.

Sora promised to change everything. It's shutting down in three weeks. The tools that survived (Kling, Runway, ComfyUI, Flux) survived because they solved real problems for real producers. Not because they promised a future where producers wouldn't be needed.

The hype is over. The work continues. That's actually good news.

Omar Kamel is AI Creative & Production Lead at Optix (Publicis Groupe), Dubai.
