March 15, 2026

What AI Can and Can't Do in Creative Production Right Now

An honest assessment from the production floor, not the demo stage.

I use AI tools every day. I use them to produce real work for real clients with real budgets and real deadlines. I'm not an AI skeptic. I'm an AI practitioner. And precisely because I use these tools daily, I know where they deliver and where they bluff.

What follows is an honest inventory. March 2026. No speculation, no roadmap promises, no "it'll get there soon." What works today, and what doesn't.

What AI Can Do

Generate Production-Quality Still Images

This is AI's most mature capability in creative production, and it's transformative.

My primary tool for production stills is Nano Banana Pro (technically Gemini 3 Pro Image from Google DeepMind, but everyone calls it Nano Banana Pro). It generates up to 4K resolution in various aspect ratios, blends up to 14 reference images while keeping up to 5 people consistent, and, critical for my work in the GCC, it actually understands regional cultural context. It knows the difference between an Emirati Kandura and a Saudi Thobe. That's not a trivial distinction; it's the difference between a credible ad and one that tells your audience you didn't bother to learn anything about them. I also use it for image editing, alongside Qwen Image Edit, which leads on precise text editing in images.

For local pipeline work, Flux 2 from Black Forest Labs (32 billion parameters, multi-reference, up to 4-megapixel resolution) remains excellent, especially in ComfyUI workflows where fine control over every generation parameter matters. Other strong open-source options include Z-Image from Alibaba, which delivers 95% of Flux's quality at 20% of the compute cost. Midjourney V7 excels at art direction and mood; its Omni Reference feature enables consistent character generation, which was the biggest pain point a year ago. And Adobe Firefly Image Model 5 offers something no other tool matches: IP indemnification on enterprise plans, meaning Adobe assumes the legal risk of copyright claims.

Where image generation shines:

- Product visualization: Placing products in environments, lighting conditions, and contexts that would cost thousands to photograph. This is table stakes now. Most e-commerce teams use it routinely.

- Concept art and mood boards: Compressing the ideation phase from days to hours. The single biggest workflow improvement I've seen AI deliver.

- Batch variations: Generating 50 versions of an approved concept for A/B testing, regional adaptation, or format variation. Repetitive production work that AI eats for breakfast.

- Background generation and extension: Generative Fill in Photoshop became the quiet workhorse of the industry: extending backgrounds, removing objects, quick compositing. It just works.
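The batch-variation item above is the easiest one to make concrete. A minimal sketch of how a 50-asset job list might be planned before anything is sent to a generator, assuming a hypothetical base prompt, market list, format list, and treatment list (none of these names come from a real tool's API; the output is just a list of job descriptions):

```python
from itertools import product

# Hypothetical campaign axes; swap in the real approved concept and specs.
BASE_PROMPT = "approved hero concept: product on marble counter, soft morning light"
MARKETS = ["UAE", "KSA", "Kuwait", "Qatar", "Bahrain"]
FORMATS = {"square": "1:1", "story": "9:16", "landscape": "16:9",
           "portrait": "4:5", "banner": "3:1"}
TREATMENTS = ["warm grade", "cool grade"]


def build_variation_jobs(base_prompt, markets, formats, treatments):
    """Return one job dict per market x format x treatment combination."""
    jobs = []
    for market, (fmt_name, ratio), treatment in product(
        markets, formats.items(), treatments
    ):
        jobs.append({
            # Stable, lowercase ID so each output can be traced back to its spec.
            "id": f"{market}-{fmt_name}-{treatment.replace(' ', '_')}".lower(),
            "prompt": f"{base_prompt}, localized for {market}, {treatment}",
            "aspect_ratio": ratio,
        })
    return jobs


jobs = build_variation_jobs(BASE_PROMPT, MARKETS, FORMATS, TREATMENTS)
print(len(jobs))       # 5 markets x 5 formats x 2 treatments = 50
print(jobs[0]["id"])   # uae-square-warm_grade
```

Planning the matrix up front keeps every output traceable to a market, format, and treatment, which matters later when someone has to curate the set.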

The caveat: Getting a single impressive image is easy. Getting 40 images that look like they belong to the same campaign, with consistent lighting, color palette, character appearance, and brand language? That requires skilled operators using ControlNet, IPAdapter, custom-trained LoRAs, and hours of curation. The tool is fast; the taste is slow.

Generate Short-Form Video

AI video crossed from "impressive demo" to "production tool" in the past year, and two platforms drove that shift.

Kling 3.0 (by Kuaishou, released February 2026) currently leads on raw specifications. The numbers: 4K resolution at 60fps, clips up to 15 seconds, physics-accurate motion, and (this is the breakthrough) multi-shot storytelling with up to six connected shots maintaining character and scene consistency. Its Chain-of-Thought reasoning means the model plans complex scenes before generating them. The Motion Control update (March 2026) added 6-axis camera control and path drawing for object movement.

Runway Gen-4.5 holds the top position on Artificial Analysis benchmarks with 1,247 Elo points. It leads on cinematic quality: sharper visuals, smoother motion, temporal consistency that would have been unthinkable eighteen months ago.

What's production-viable: Social media content, product reveal clips, motion backgrounds, B-roll, animated product shots, style-transfer sequences, pre-visualization, and, increasingly, hero content for digital campaigns when paired with post-production.

Then there's Seedance 2.0 from ByteDance. It is technically impressive, especially for animation and stylized content, but it's also a cautionary tale in how production viability can evaporate overnight. After Hollywood studios including Disney, Netflix, Paramount, and Sony threatened legal action over IP violations, ByteDance deployed content filters so aggressive that all realistic human face uploads are now blocked as reference images. The model is still powerful for animation and pure text-to-video, but for character-driven content with real faces? Forget it. You can't maintain character consistency across scenes if the model won't let you use a face reference. Technical capability means nothing if legal pressure or platform policy can yank it out from under you.

What's not production-viable: Anything requiring precise dialogue performance, specific hand interactions with objects, reliable text rendering in-frame, or more than about 15 seconds of continuous coherent footage. We're getting closer, but we're not there.
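The four blockers above can be turned into a quick pre-flight check on a shot list. This is only a sketch of that reasoning: the `ShotRequirements` fields and the `video_blockers` function are hypothetical names invented for illustration, and the 15-second threshold is the rough ceiling cited in this article, not a limit read from any platform.

```python
from dataclasses import dataclass

# Rough ceiling for continuous coherent footage, as cited in this article.
MAX_CONTINUOUS_SECONDS = 15


@dataclass
class ShotRequirements:
    """Hypothetical description of what a single shot needs."""
    needs_dialogue_performance: bool = False
    needs_hand_object_interaction: bool = False
    needs_readable_text_in_frame: bool = False
    continuous_seconds: float = 5.0


def video_blockers(shot: ShotRequirements) -> list[str]:
    """Return the reasons a shot is a poor fit for AI video today."""
    blockers = []
    if shot.needs_dialogue_performance:
        blockers.append("precise dialogue performance")
    if shot.needs_hand_object_interaction:
        blockers.append("specific hand interactions with objects")
    if shot.needs_readable_text_in_frame:
        blockers.append("reliable in-frame text (composite it in post)")
    if shot.continuous_seconds > MAX_CONTINUOUS_SECONDS:
        blockers.append(
            f"more than {MAX_CONTINUOUS_SECONDS}s of continuous footage"
        )
    return blockers


product_reveal = ShotRequirements(continuous_seconds=8)
spokesperson = ShotRequirements(needs_dialogue_performance=True,
                                continuous_seconds=20)

print(video_blockers(product_reveal))  # []
print(len(video_blockers(spokesperson)))  # 2
```

An empty list does not mean the shot will work, only that none of the known blockers apply. The output still needs a human look.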

Clone and Translate Voices

This is perhaps the most underappreciated AI capability in advertising.

ElevenLabs Professional Voice Cloning produces output that is functionally indistinguishable from the source voice across 28 languages. You give it thirty minutes of high-quality audio, and it returns a voice model that maintains the speaker's unique characteristics (timbre, cadence, accent) in any language. Their Voice Remixing feature lets you adjust gender, accent, pacing, and style through natural language prompts.

For advertising production, this means a single spokesperson shoot can yield campaign audio in every market language without rebooking talent. That's a direct cost and timeline improvement that's easy to quantify.

HeyGen's Avatar IV takes it further: it interprets vocal emotion and generates corresponding micro-expressions, head tilts, and blink patterns. The lip-sync across 175+ languages is strong enough for corporate video, social content, and many advertising applications. Audio dubbing is now unlimited on their plans, which matters when you're producing at scale.

Accelerate Research, Writing, and Strategy

Claude and ChatGPT are useful for:

- First-draft copywriting: Headlines, body copy, social posts, email campaigns. Not finished copy, but getting from blank page to working draft in minutes instead of hours.

- Translation assistance: Not replacing native-speaker review, but producing first drafts in multiple languages that are dramatically better than old machine translation.

- Research synthesis: Summarizing competitive landscapes, market reports, and trend data. A real time-saver for strategy teams.

- Brainstorming at scale: Generating fifty tagline variations, exploring different tonal directions, pressure-testing concepts against different audiences.

The BCG/Harvard study from 2023 found that consultants using GPT-4 completed tasks 25% faster with 40% higher quality, but only for tasks within the AI's capability frontier. For tasks outside that frontier, AI users performed 23% worse. That finding has held up. LLMs make strong writers faster; they don't make weak writers strong.

Generate Scratch Music and Sound Design

Suno and Udio can generate full songs (vocals, instruments, arrangement) in specified genres within seconds. For production purposes, this is invaluable as temp music during the concepting and editing phases. Instead of licensing a placeholder track or describing the vibe you want, you generate it.

A major development: UMG has settled with Udio and is licensing its catalog for a new commercial music creation and streaming experience launching in 2026. This is the industry moving from litigation to collaboration, a significant signal. The Suno case remains in litigation, with summary judgment motions due in March 2026.

For scratch tracks and production music, AI is ready. For final broadcast music, the legal landscape is still settling, and the quality, while impressive, tends toward competent pastiche rather than real composition.

What AI Cannot Do

Maintain Consistency Without Expert Operators

This is the gap that separates AI demos from AI production, and it hasn't closed yet.

Generate a character in Midjourney. Beautiful. Now generate that same character from a different angle, in different lighting, wearing a different outfit, expressing a different emotion, and make it look like the same person across all of them. You'll spend hours using Omni Reference (--oref) parameters, IPAdapter references, LoRA conditioning, and manual curation to get results that a photographer achieves by pointing a camera at the same person twice.

Multi-shot storytelling in Kling 3.0 is a major step forward, but it's still limited to six connected shots, and "connected" doesn't mean "perfectly consistent." Skin tones shift. Accessories appear and disappear. The character ages three years between shot two and shot four.

For campaign work requiring a character or spokesperson across dozens of assets, traditional production is still more reliable. AI gets you 85% of the way, and the remaining 15% is where you decide whether to fix it manually or reshoot.

Understand Brand Guidelines

No AI tool has read your brand book and internalized it. Not one.

You can train a LoRA on brand assets. You can feed style references through IPAdapter. You can write exhaustive prompts specifying "Pantone 286 C, Gotham Bold, 60% negative space, premium minimalist aesthetic." And the model will interpret those instructions with the confident imprecision of an intern who heard the brief secondhand.

Brand stewardship requires understanding why a brand looks the way it does: the strategic thinking behind the guidelines, the hierarchy of elements, the rules that can bend and the ones that can't. AI follows instructions; it doesn't understand intent. Every AI-generated asset needs human review against the brand guidelines, and that review takes the same amount of time it always did.

Generate Reliable Text in Images and Video

Despite significant improvements (Flux 2 is legitimately good at text rendering in still images), text remains AI's most conspicuous weakness in video. Product names warp. Headlines dissolve into visual salad. Fine print becomes abstract art.

For still images, Flux 2 and Ideogram have largely solved English text rendering. Arabic text rendering remains poor across all models, a problem I deal with daily in the GCC market, reflecting the overwhelming Western bias in training data.

For video, text in frame is still effectively broken across all platforms. If your shot needs readable text, composite it in post.

Replace Creative Judgment

AI generates. Humans select. That distinction matters more than any technical capability.

I can generate a hundred images in an hour. Selecting the five that actually work for the campaign (the ones that match the brief, that feel right for the brand, that will resonate with the audience, that avoid cultural pitfalls, that have the right energy and emotional register) takes me almost as long as it took before AI.

The BCG study's finding about performance decreasing outside the AI frontier is the data point that matters most here. When people defer to AI judgment instead of exercising their own, quality drops. AI is a power tool. Power tools in unskilled hands don't produce better furniture; they produce injuries.

Navigate Cultural Nuance (With One Notable Exception)

This one is personal and professional, and it's where tool selection becomes a strategic decision, not just a quality preference.

Most AI models are trained predominantly on Western, English-language data. When I generate content for GCC markets using Midjourney or Flux, I routinely encounter:

- Default representations that don't match local dress, social norms, or aesthetic preferences

- Arabic text that's garbled, reversed, or grammatically nonsensical

- Architectural and environmental defaults that look like suburban America, not Dubai or Riyadh

- Complete blindness to religious and cultural sensitivities that any junior creative in the region would instinctively navigate

This is why Nano Banana Pro became my primary tool. It's the first image generation model I've used that actually understands GCC cultural context. Ask it for a man in traditional Emirati dress and you get a Kandura: collarless, with the tarboosh tassel, clean and flowing. Ask for Saudi traditional dress and you get a Thobe: buttoned collar, different cut, different embroidery patterns. That distinction seems small if you've never worked in this region. If you have, you know it's the kind of detail that determines whether a client trusts you or fires you.

But even Nano Banana Pro doesn't eliminate the need for human review. Cultural sensitivity goes beyond clothing; it extends to social dynamics, gender representation, religious context, and a thousand unwritten rules that no model has fully internalized. Human review by people who understand the market isn't optional; it's the only thing standing between your campaign and an embarrassing cultural misstep.

Produce Broadcast-Quality Long-Form Video

Let me put this clearly: no current AI tool can generate a 30-second commercial as a single, coherent output.

Fifteen seconds from Kling 3.0 is the current ceiling for high-quality continuous footage. To build a 30-second spot, you're generating multiple clips, editing them together in DaVinci Resolve or Premiere, adding separately produced audio, compositing brand elements, color grading, and fixing artifacts. By the time you're done, the workflow is comparable in complexity to traditional post-production, just with different raw material.
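The clip arithmetic behind that workflow is simple but worth spelling out when scoping a job. A minimal sketch, assuming the 15-second ceiling cited above and an even split of the running time across clips (`plan_clips` is a hypothetical helper, not part of any tool):

```python
import math


def plan_clips(spot_seconds: float, max_clip_seconds: float = 15.0) -> list[float]:
    """Split a spot into the fewest equal-length clips under the ceiling."""
    if spot_seconds <= 0 or max_clip_seconds <= 0:
        raise ValueError("durations must be positive")
    # Fewest clips such that no single clip exceeds the ceiling.
    count = math.ceil(spot_seconds / max_clip_seconds)
    return [spot_seconds / count] * count


thirty = plan_clips(30)
print(len(thirty), thirty[0])   # 2 15.0
print(len(plan_clips(40)))      # 3
```

This is the theoretical minimum. In practice each cut adds an edit point to match for lighting, color, and character, so real spots usually use more, shorter clips than the minimum.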

For hero broadcast work (the kind of ad that runs during the Super Bowl or across airport digital OOH networks), most serious agencies are still shooting live action and using AI for augmentation, not replacement.

Guarantee Legal Safety

The copyright landscape for AI-generated content remains a minefield.

The US Copyright Office has ruled that purely AI-generated images cannot be copyrighted, though works with sufficient human authorship can be. The EU AI Act requires transparency about AI-generated content shown to consumers, with enforcement ramping up through 2026. Getty v. Stability AI remains in litigation. And the Suno/Udio cases may set precedent for the entire generative AI music industry.

Adobe's IP indemnification for Firefly outputs remains the industry's only significant legal safety net. Everything else carries risk that legal departments are still learning to evaluate.

Deliver Emotional Authenticity

This one cuts deep because it's the entire point of advertising.

AI-generated humans exhibit what I call "stock photo energy": technically correct, emotionally vacant. The eyes don't quite engage. The smile doesn't reach the right muscles. The body language reads as "posed" rather than "caught." For performance marketing (product shots, format variations, banner ads), this doesn't matter. For brand campaigns that need to make someone feel something? It matters enormously.

Coca-Cola's AI-generated holiday ad (late 2024) demonstrated this precisely. The visuals were competent. The craft was evident. And the internet's overwhelming reaction was: "this feels dead." Not because the technology failed technically, but because emotional resonance requires something that generative models can't learn from data: the specific, idiosyncratic, beautifully imperfect way real humans exist in real moments.

For luxury brands, healthcare campaigns, and any work that depends on genuine human connection, AI-generated humans remain a risk. The uncanny valley has narrowed, but it hasn't closed. And audiences are getting better at detecting it, not worse.

Replace the Human Who Says "This One"

I've saved this for last because it's the limitation that matters most, and it's the one most easily overlooked.

Every AI workflow I run generates dozens, sometimes hundreds, of outputs. The tool that selects the right five from those hundreds, the ones that actually serve the brief, match the brand, respect the audience, and carry the right emotional weight, is a human being with experience, taste, and judgment.

That selection process is the creative act. It's not the generation. Every photographer in history understood this: you shoot 500 frames to get 12 for the contact sheet to get 1 for the cover. The camera didn't make the photograph. The eye behind it did.

AI has dramatically changed the front end of that equation: what we generate and how fast. It hasn't touched the back end: what we choose and why. And I don't see a path to it doing so, because choosing requires understanding purpose, and purpose lives outside the model.

The Honest Summary

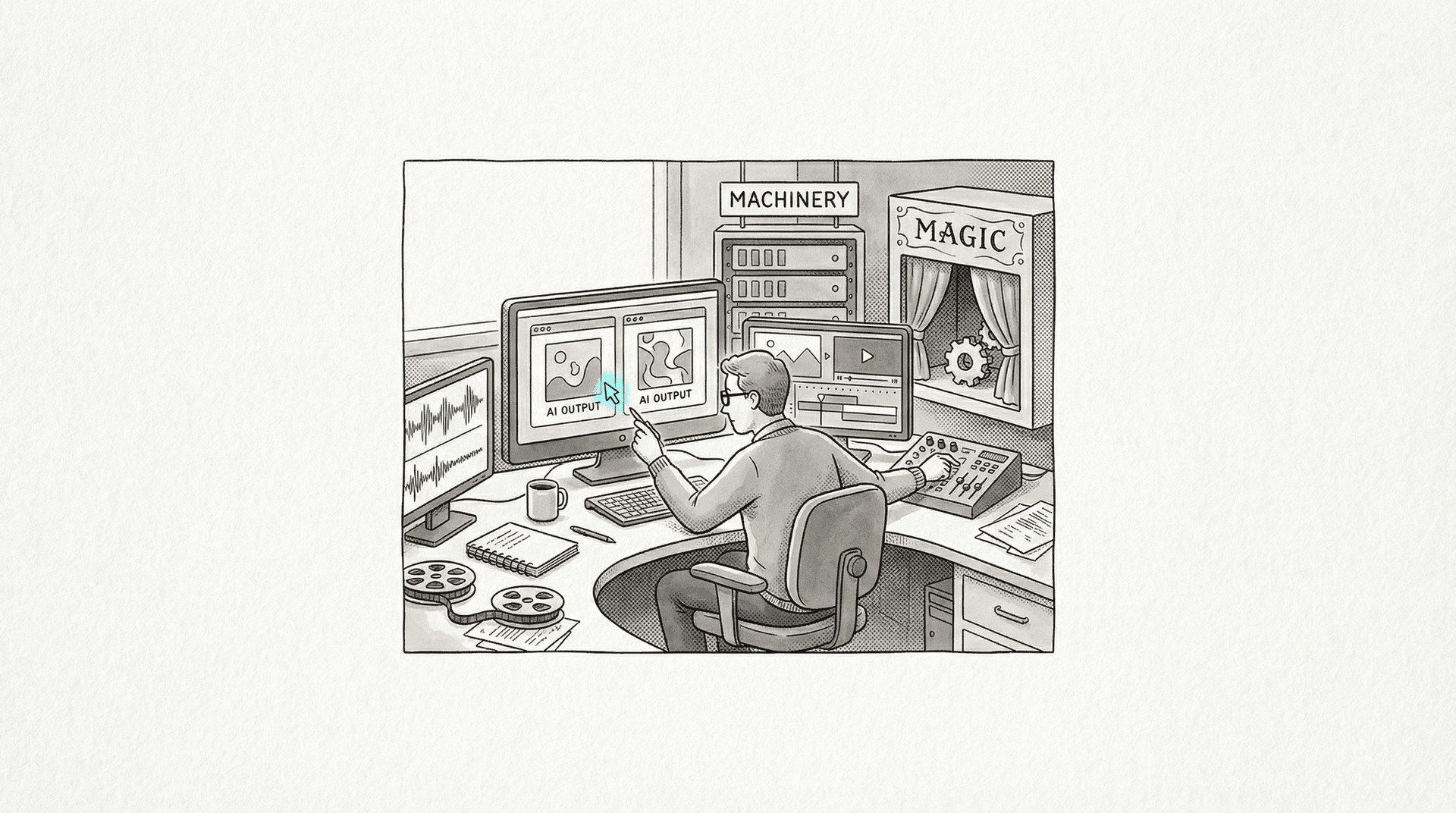

AI in creative production is real, useful, and improving fast. It is not magic, it is not free, and it is not a replacement for the people who know how to make good work.

The tools that deliver genuine value are the ones that solve specific production problems: batch variation, format adaptation, language localization, concept visualization, and the tedious mechanical work that used to consume creative hours without adding creative value.

The tools that overpromise are the ones that claim to replace the creative process itself. They can't. And the most expensive lesson an agency can learn is the difference between a tool that accelerates production and a tool that replaces judgment.

The question isn't "what can AI do?" The question is "what does this specific deliverable require, and does AI serve it?" Sometimes the answer is yes and it saves you days. Sometimes the answer is no and it wastes your morning. Knowing the difference before you start is what separates practitioners from enthusiasts.

Know the difference. Use accordingly.

Omar Kamel is AI Creative & Production Lead at Optix (Publicis Groupe), Dubai.
